POSTS

Don't Resist AI. But Don't Forget Your Hands and Eye Still Matter

2026 is shaping up to be really interesting. More new tools are embracing AI, like Pencil and Paper. Designers are exploring new ways of designing by prompting, whether by typing or by voice, now that Claude Code has a voice mode.

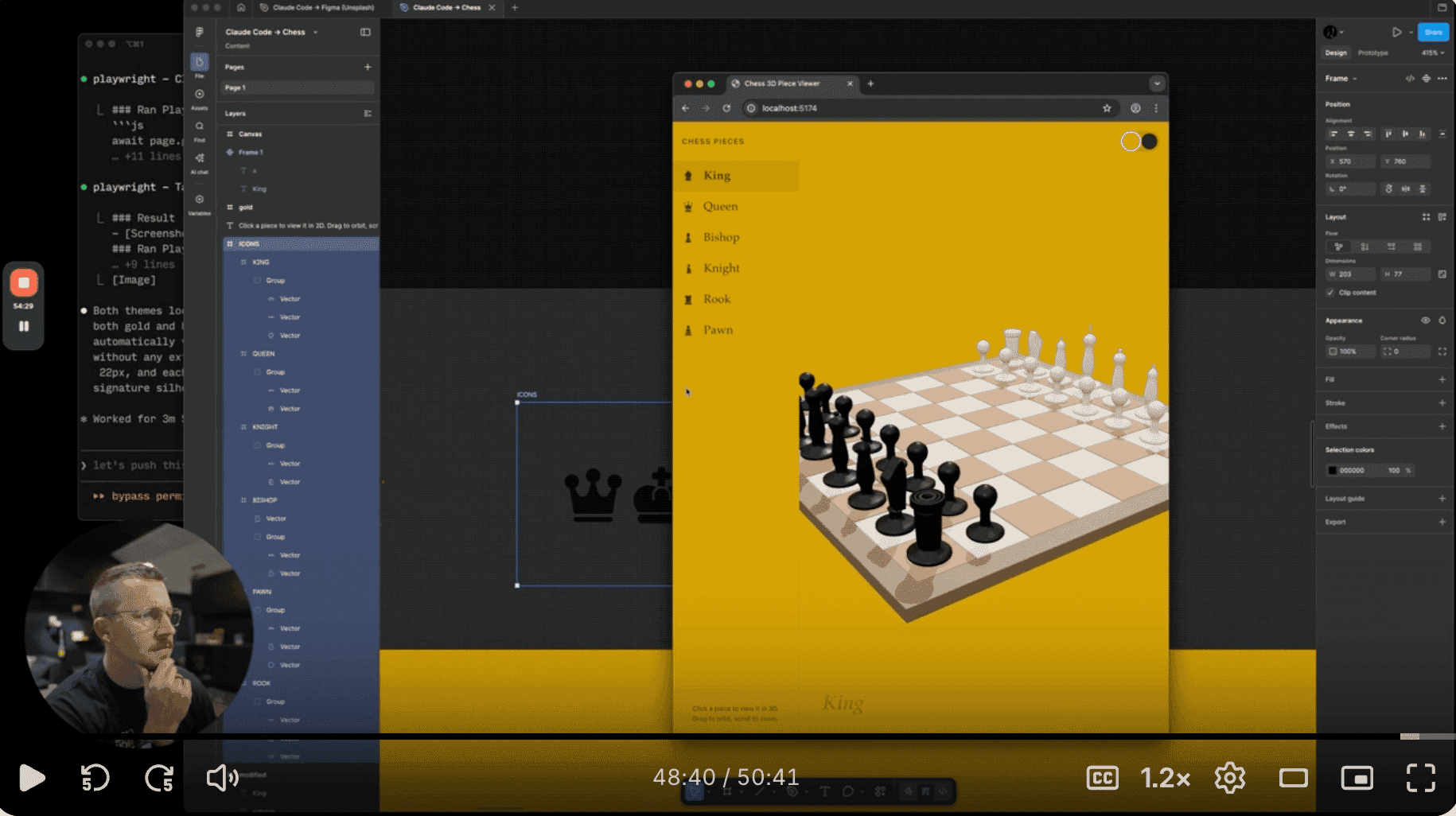

About a month ago, Figma followed the same trajectory by adding prompt-based design. You prompt in Claude Code and bring the result into Figma. Personally, I still find the workflow a bit clunky. It codes first, then I need to prompt again if I want to push it to Figma. Compare that to Paper, which builds the UI right on the canvas immediately.

Matt D. Smith (MDS) shared his walkthrough on how to use Claude Code → Figma. He showed something practical, a real workflow in action. That gave me an idea: designers shouldn't resist using AI. We can use it to get up to speed, produce first drafts, and explore ideas faster. But relying on prompting 100% is not enough. Sometimes your hands are still faster. And your eyes and instinct, trained by UI fundamentals like typography, spacing, and visual hierarchy, are what make good design GREAT.

The combination of AI speed and solid craft knowledge is what can really elevate our work.

Showing “Now Playing” from the Spotify API in Framer

What do you add to a portfolio website besides an about page, your work, and your credentials? For me, I want my portfolio to feel personal. It should reflect me.

One of my inspirations was Lee Robinson. A couple of years ago, he added little live stats to his site. He doesn’t do it anymore, but it gave me an idea: a personal site can be more than static pages. By connecting to APIs, it can become dynamic. It can reflect live data from the products and services you use.

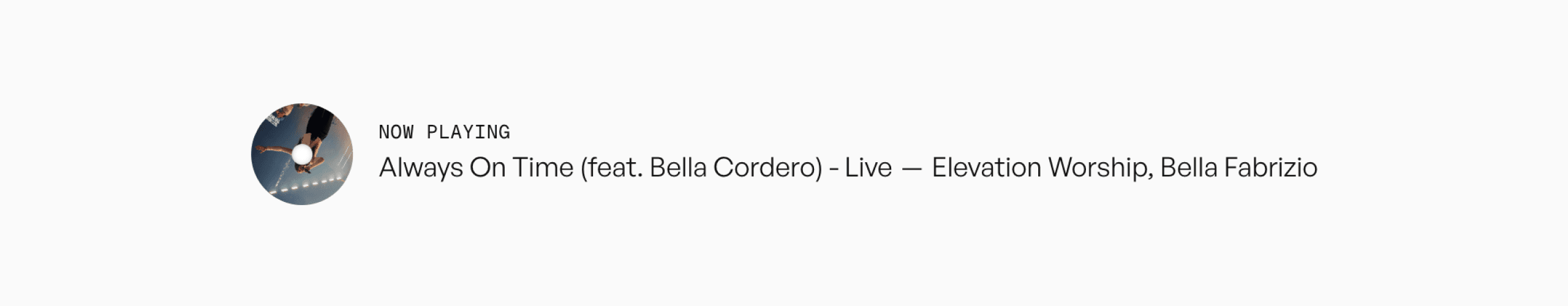

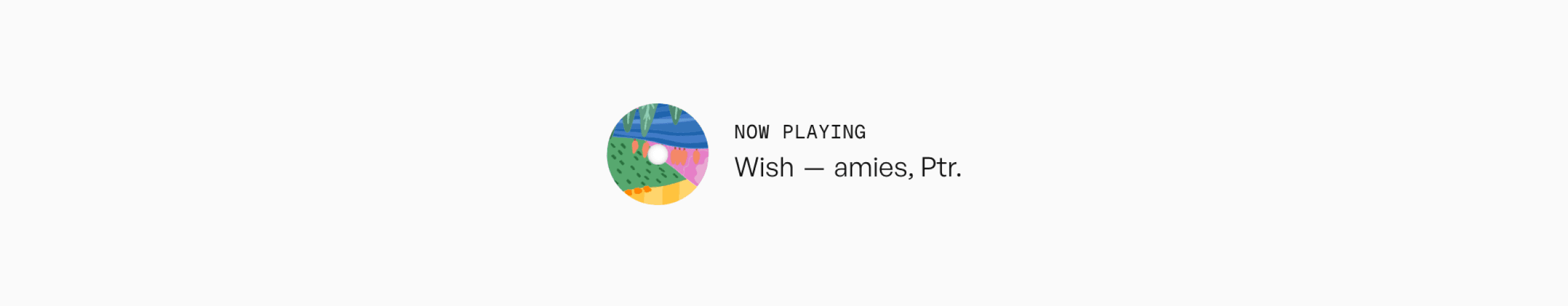

Here’s one idea: show what’s currently playing on my Spotify. It displays the track title, artist, and album art in real time.

I like it a lot. It’s a small detail, but it brings personality to the site, especially showing the album art. It adds texture and color to a website that’s mostly black and white. And because it’s connected to the Spotify API, it updates automatically whenever the song changes.

Thanks to Claude Code, ideas like this move much faster now. I gave it a prompt, adjusted a few details, and ended up with a working React component in Framer that fetches data from an endpoint on my domain. I host the endpoint as a small serverless function on Vercel. My traffic is low, so the free tier is more than enough.

Here's what the Framer React component looks like:

Some variations you can get from customizing the properties:

And the empty state…

Then loading state…

If you’re curious, here’s the source code.

If you have no idea how to set it up, copy and paste the article and the code into Claude Code or Codex and ask it to wire it up for you.

```tsx
import { addPropertyControls, ControlType } from "framer"
import { useEffect, useState } from "react"

interface NowPlayingData {
  albumImageUrl: string
  title: string
  artist: string
  songUrl: string
  loaded: boolean
}

type Layout = "compact" | "spacious"
type AlbumStyle = "square" | "cd"

interface Props {
  apiUrl: string
  layout: Layout
  label: {
    text: string
    color: string
    font: object
  }
  track: {
    titleColor: string
    artistColor: string
    separator: string
    font: object
  }
  albumArt: {
    show: boolean
    style: AlbumStyle
  }
  content: {
    silenceText: string
    loadingText: string
  }
}

const layoutConfig = {
  compact: {
    albumSize: 32,
    albumRadius: 4,
    labelSize: 10,
    titleSize: 12,
    artistSize: 12,
    gap: 8,
    innerGap: 5,
    labelGap: 2,
  },
  spacious: {
    albumSize: 64,
    albumRadius: 10,
    labelSize: 12,
    titleSize: 20,
    artistSize: 20,
    gap: 16,
    innerGap: 6,
    labelGap: 4,
  },
}

const ANIMATIONS = `
  @keyframes marquee-scroll {
    0% { transform: translateX(0); }
    100% { transform: translateX(-50%); }
  }
  @keyframes cd-spin {
    from { transform: rotate(0deg); }
    to { transform: rotate(360deg); }
  }
  @keyframes skeleton-shine {
    0% { background-position: -200% 0; }
    100% { background-position: 200% 0; }
  }
`

export default function SpotifyNowPlaying({
  apiUrl,
  layout,
  label,
  track,
  albumArt,
  content,
}: Props) {
  const [imgLoaded, setImgLoaded] = useState(false)
  const [data, setData] = useState<NowPlayingData>({
    albumImageUrl: "",
    title: "",
    artist: "",
    songUrl: "",
    loaded: false,
  })

  useEffect(() => {
    fetch(apiUrl)
      .then((res) => res.json())
      .then((json) => {
        const hasTrackData = json.isPlaying && json.title && json.songUrl
        if (hasTrackData) {
          setData({
            albumImageUrl: json.albumImageUrl,
            title: json.title,
            artist: json.artist,
            songUrl: json.songUrl,
            loaded: true,
          })
        } else {
          setData({
            albumImageUrl: "",
            title: content.silenceText,
            artist: "",
            songUrl: "",
            loaded: true,
          })
        }
      })
      .catch(() => {
        setData({
          albumImageUrl: "",
          title: "Couldn't load now playing",
          artist: "",
          songUrl: "",
          loaded: true,
        })
      })
  }, [apiUrl, content.silenceText])

  useEffect(() => {
    setImgLoaded(false)
  }, [data.albumImageUrl])

  const {
    albumSize,
    albumRadius,
    labelSize,
    titleSize,
    artistSize,
    gap,
    innerGap,
    labelGap,
  } = layoutConfig[layout] ?? layoutConfig.compact

  const trackTitle = data.loaded ? data.title : content.loadingText
  const hasArtist = data.title.trim() !== "" && data.artist.trim() !== ""

  // Speed: roughly 1s per 4 chars, min 6s
  const marqueeDuration = Math.max(
    6,
    (trackTitle.length + (data.artist?.length ?? 0)) * 0.25
  )

  const handleClick = () => {
    if (data.songUrl) window.open(data.songUrl, "_blank")
  }

  const isCD = albumArt.style === "cd"
  const imgBorderRadius = isCD ? "50%" : albumRadius

  // Show album art area only while loading (skeleton) or when a song is playing
  const showAlbumArea = albumArt.show && (!data.loaded || !!data.albumImageUrl)

  return (
    <>
      <style>{ANIMATIONS}</style>
      <div
        onClick={handleClick}
        style={{
          display: "flex",
          flexDirection: "row",
          alignItems: "center",
          gap,
          cursor: data.songUrl ? "pointer" : "default",
          width: "100%",
          height: "100%",
          overflow: "hidden",
        }}
      >
        {/* Album art */}
        {showAlbumArea && (
          <div
            style={{
              position: "relative",
              width: albumSize,
              height: albumSize,
              flexShrink: 0,
              borderRadius: imgBorderRadius,
              overflow: "hidden",
            }}
          >
            {/* Skeleton: only while fetching or image not yet loaded */}
            {(!data.loaded || !imgLoaded) && (
              <div
                style={{
                  position: "absolute",
                  inset: 0,
                  background:
                    "linear-gradient(90deg, #d0d0d0 25%, #e8e8e8 50%, #d0d0d0 75%)",
                  backgroundSize: "200% 100%",
                  animationName: "skeleton-shine",
                  animationDuration: "1.5s",
                  animationTimingFunction: "linear",
                  animationIterationCount: "infinite",
                }}
              />
            )}

            {/* Image */}
            {data.albumImageUrl && (
              <img
                src={data.albumImageUrl}
                alt={`${data.title} album art`}
                onLoad={() => setImgLoaded(true)}
                style={{
                  width: albumSize,
                  height: albumSize,
                  objectFit: "cover",
                  display: "block",
                  opacity: imgLoaded ? 1 : 0,
                  animationName: isCD ? "cd-spin" : "none",
                  animationDuration: "5s",
                  animationTimingFunction: "linear",
                  animationIterationCount: "infinite",
                }}
              />
            )}

            {/* CD center hole */}
            {isCD && imgLoaded && (
              <div
                style={{
                  position: "absolute",
                  top: "50%",
                  left: "50%",
                  transform: "translate(-50%, -50%)",
                  width: albumSize * 0.2,
                  height: albumSize * 0.2,
                  borderRadius: "50%",
                  background: "white",
                  boxShadow: "inset 0 0 3px rgba(0,0,0,0.25)",
                  pointerEvents: "none",
                }}
              />
            )}
          </div>
        )}

        {/* Text column */}
        <div
          style={{
            display: "flex",
            flexDirection: "column",
            gap: labelGap,
            overflow: "hidden",
            flex: 1,
            minWidth: 0,
          }}
        >
          {/* Now playing label */}
          <span
            style={{
              fontSize: labelSize,
              color: label.color,
              opacity: 1,
              whiteSpace: "nowrap",
              textTransform: "uppercase",
              letterSpacing: "0.06em",
              ...label.font,
            }}
          >
            {label.text}
          </span>

          {/* Compact: inline marquee (disabled for silence) */}
          {layout === "compact" ? (
            <div style={{ overflow: "hidden", width: "100%" }}>
              <div
                style={{
                  display: "inline-flex",
                  alignItems: "center",
                  whiteSpace: "nowrap",
                  animationName: data.songUrl ? "marquee-scroll" : "none",
                  animationDuration: `${marqueeDuration}s`,
                  animationTimingFunction: "linear",
                  animationIterationCount: "infinite",
                }}
              >
                {/* Duplicate for seamless loop */}
                {(data.songUrl ? [0, 1] : [0]).map((i) => (
                  <span
                    key={i}
                    style={{
                      display: "inline-flex",
                      alignItems: "center",
                      gap: innerGap,
                      paddingRight: 15,
                    }}
                  >
                    <span
                      style={{
                        fontSize: titleSize,
                        color: track.titleColor,
                        ...track.font,
                      }}
                    >
                      {trackTitle}
                    </span>
                    {hasArtist && (
                      <>
                        <span
                          style={{
                            fontSize: titleSize,
                            color: track.titleColor,
                            ...track.font,
                          }}
                        >
                          {track.separator}
                        </span>
                        <span
                          style={{
                            fontSize: artistSize,
                            color: track.artistColor,
                            ...track.font,
                          }}
                        >
                          {data.artist}
                        </span>
                      </>
                    )}
                  </span>
                ))}
              </div>
            </div>
          ) : (
            /* Spacious: inline, no marquee */
            <div
              style={{
                display: "flex",
                flexDirection: "row",
                alignItems: "center",
                gap: innerGap,
                overflow: "hidden",
              }}
            >
              <span
                style={{
                  fontSize: titleSize,
                  color: track.titleColor,
                  whiteSpace: "nowrap",
                  overflow: "hidden",
                  textOverflow: "ellipsis",
                  flexShrink: 0,
                  ...track.font,
                }}
              >
                {trackTitle}
              </span>
              {hasArtist && (
                <>
                  <span
                    style={{
                      fontSize: titleSize,
                      color: track.titleColor,
                      flexShrink: 0,
                      ...track.font,
                    }}
                  >
                    {track.separator}
                  </span>
                  <span
                    style={{
                      fontSize: artistSize,
                      color: track.artistColor,
                      whiteSpace: "nowrap",
                      overflow: "hidden",
                      textOverflow: "ellipsis",
                      ...track.font,
                    }}
                  >
                    {data.artist}
                  </span>
                </>
              )}
            </div>
          )}
        </div>
      </div>
    </>
  )
}

SpotifyNowPlaying.defaultProps = {
  apiUrl: "https://[yourdomain]/api/now-playing",
  layout: "compact",
  label: {
    text: "Now Playing",
    color: "#000000",
    font: {},
  },
  track: {
    titleColor: "#000000",
    artistColor: "#000000",
    separator: "—",
    font: {},
  },
  albumArt: {
    show: true,
    style: "square",
  },
  content: {
    silenceText: "I'm currently enjoying the silence",
    loadingText: "Loading...",
  },
}

addPropertyControls(SpotifyNowPlaying, {
  apiUrl: {
    type: ControlType.String,
    title: "API URL",
  },
  layout: {
    type: ControlType.Enum,
    title: "Layout",
    options: ["compact", "spacious"],
    optionTitles: ["Compact", "Spacious"],
  },
  albumArt: {
    type: ControlType.Object,
    title: "Album Art",
    controls: {
      show: {
        type: ControlType.Boolean,
        title: "Show",
      },
      style: {
        type: ControlType.Enum,
        title: "Style",
        options: ["square", "cd"],
        optionTitles: ["Square", "Spinning CD"],
        hidden: (props: { show: boolean }) => !props.show,
      },
    },
  },
  label: {
    type: ControlType.Object,
    title: "Label",
    controls: {
      text: {
        type: ControlType.String,
        title: "Text",
      },
      color: {
        type: ControlType.Color,
        title: "Color",
      },
      font: {
        type: ControlType.Font,
        title: "Font",
        controls: "extended",
      },
    },
  },
  track: {
    type: ControlType.Object,
    title: "Track",
    controls: {
      titleColor: {
        type: ControlType.Color,
        title: "Title Color",
      },
      artistColor: {
        type: ControlType.Color,
        title: "Artist Color",
      },
      separator: {
        type: ControlType.String,
        title: "Separator",
      },
      font: {
        type: ControlType.Font,
        title: "Font",
        controls: "extended",
      },
    },
  },
  content: {
    type: ControlType.Object,
    title: "Content",
    controls: {
      silenceText: {
        type: ControlType.String,
        title: "Silence Text",
      },
      loadingText: {
        type: ControlType.String,
        title: "Loading Text",
      },
    },
  },
})
```


And here's the serverless endpoint code, built with Next.js:

```ts
// nowPlaying.ts
import fetch from "node-fetch";
import { getAccessToken } from "./spotify";

const NOW_PLAYING_URL = `https://api.spotify.com/v1/me/player/currently-playing`;

const getNowPlaying = async (): Promise<any> => {
  const accessTokenData = await getAccessToken();
  const accessToken = accessTokenData.access_token;

  if (!accessToken) {
    throw new Error("Failed to get access token");
  }

  const response = await fetch(NOW_PLAYING_URL, {
    headers: {
      Authorization: `Bearer ${accessToken}`,
    },
  });

  if (!response.ok) {
    throw new Error("Failed to fetch now playing");
  }

  // Spotify answers 204 No Content when nothing is playing,
  // so there is no JSON body to parse in that case.
  if (response.status === 204) {
    return null;
  }

  return response.json();
};

export { getNowPlaying };

// spotify.ts
import axios from "axios";

const CLIENT_ID = process.env.SPOTIFY_CLIENT_ID as string;
const CLIENT_SECRET = process.env.SPOTIFY_CLIENT_SECRET as string;
const REDIRECT_URI = process.env.SPOTIFY_REDIRECT_URI as string;
const SPOTIFY_TOKEN_ENDPOINT = "https://accounts.spotify.com/api/token";

// Builds the one-time authorization URL used to obtain the refresh token.
const getSpotifyAuthUrl = (): string => {
  return `https://accounts.spotify.com/authorize?client_id=${CLIENT_ID}&response_type=code&redirect_uri=${REDIRECT_URI}&scope=user-read-currently-playing`;
};

const REFRESH_TOKEN = process.env.SPOTIFY_REFRESH_TOKEN as string;

// Exchanges the long-lived refresh token for a short-lived access token.
const getAccessToken = async (): Promise<any> => {
  const body = new URLSearchParams();
  body.append("grant_type", "refresh_token");
  body.append("refresh_token", REFRESH_TOKEN);

  try {
    const response = await axios.post(SPOTIFY_TOKEN_ENDPOINT, body, {
      headers: {
        "Content-Type": "application/x-www-form-urlencoded",
        Authorization: `Basic ${Buffer.from(
          `${CLIENT_ID}:${CLIENT_SECRET}`
        ).toString("base64")}`,
      },
    });
    return response.data;
  } catch (error) {
    console.error("Error getting access token:", error);
    throw error;
  }
};

export { getSpotifyAuthUrl, getAccessToken };
```
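One piece isn't shown above: the component reads a flat payload (`isPlaying`, `title`, `artist`, `albumImageUrl`, `songUrl`), while `getNowPlaying` hands back Spotify's raw response. Here is a minimal sketch of the mapping step in between, assuming the item is a music track. `toNowPlayingPayload` and both interfaces are hypothetical names for illustration, not part of my actual endpoint.

```typescript
// Subset of Spotify's "currently playing" response that this sketch reads.
interface SpotifyCurrentlyPlaying {
  is_playing: boolean;
  item: {
    name: string;
    artists: { name: string }[];
    album: { images: { url: string }[] };
    external_urls: { spotify: string };
  } | null;
}

// Flat shape the Framer component reads from the endpoint.
interface NowPlayingPayload {
  isPlaying: boolean;
  title?: string;
  artist?: string;
  albumImageUrl?: string;
  songUrl?: string;
}

// Hypothetical helper: flattens Spotify's response for the component.
// Pass null when Spotify returned an empty body (nothing is playing).
function toNowPlayingPayload(
  raw: SpotifyCurrentlyPlaying | null
): NowPlayingPayload {
  if (!raw || !raw.is_playing || !raw.item) {
    return { isPlaying: false };
  }
  const { item } = raw;
  return {
    isPlaying: true,
    title: item.name,
    // Join multiple artists into one display string.
    artist: item.artists.map((a) => a.name).join(", "),
    // Use the first image in the list; fall back to an empty string.
    albumImageUrl: item.album.images[0]?.url ?? "",
    songUrl: item.external_urls.spotify,
  };
}
```

In a route handler, you would call `getNowPlaying()`, pass the result through this function, and return it as JSON. With `isPlaying: false`, the component falls back to its silence text.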


I’m excited to explore more ways to make my personal website even more personal by connecting it to APIs so it feels dynamic. Next, I want to do the same for other products I use daily, like Claude Code stats.

What would you bring into your personal website?

Pomodoro Sand Timer

The Pomodoro Technique is simple and practical: 25 minutes of focused work, followed by 5 minutes of rest. A structured work–pause–repeat rhythm that helps you commit to focused time and move tasks forward.

What never felt quite right to me was how it shows up in both the interaction and the interface.

You have to tap every time. Start, stop, restart. Each session begins with a deliberate action before you can actually focus. Repeated throughout the day, that small trigger becomes friction. And once it starts, the dominant visual is a shrinking number. The screen centers the countdown. Instead of feeling contained within a block of focus, it can feel like you’re watching time being consumed.

So…

What if the buttons were removed?

What if it didn’t show the timer?

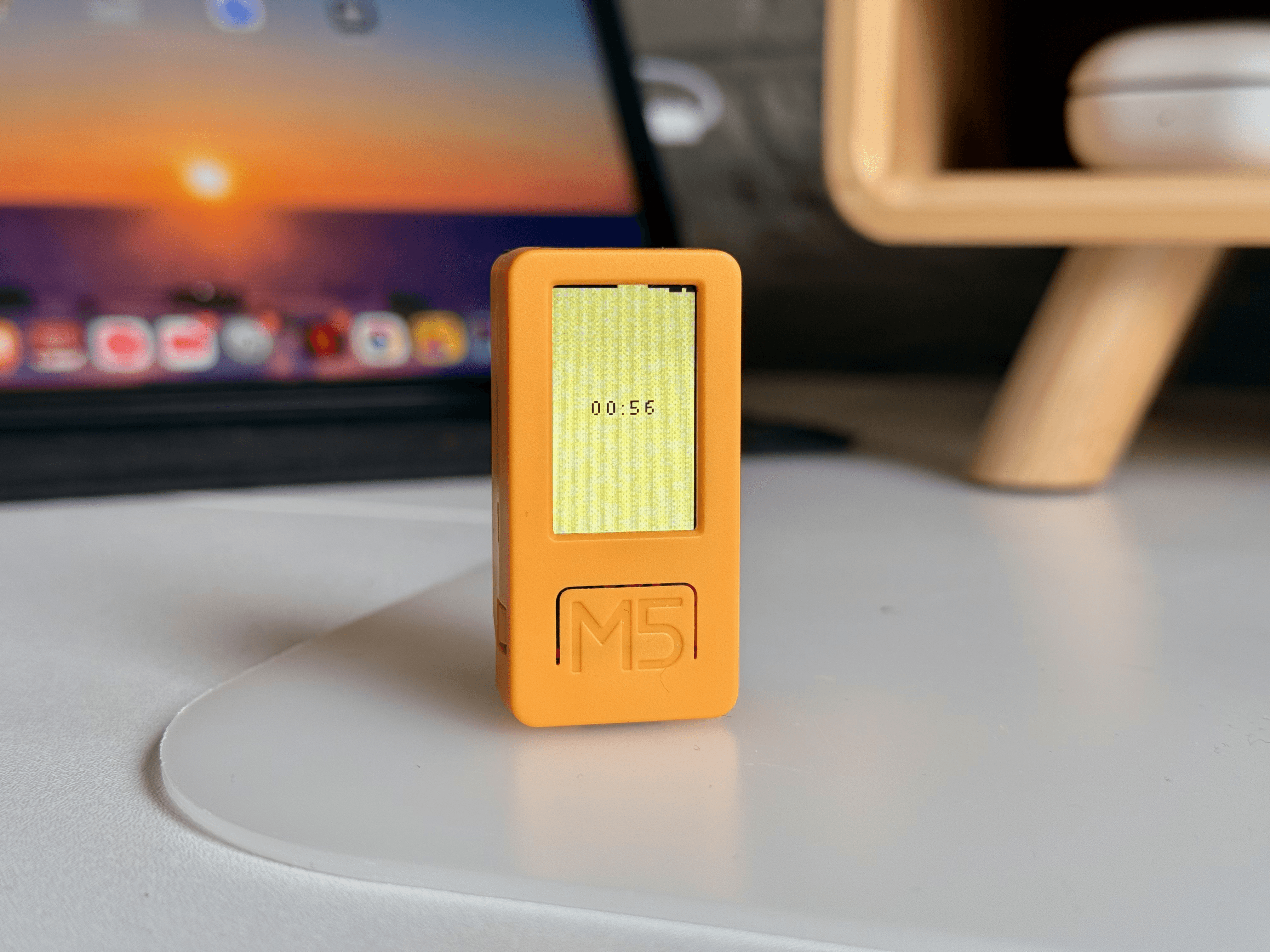

Here I'm exploring an idea with an M5StickC Plus 2. It’s small and self-contained, so the screen is limited. Whatever appears on it has to be deliberate. There’s no space for layered controls or secondary information.

It also has built-in motion sensors that detect orientation and movement. That means interaction doesn’t have to depend on tapping. The device responds to how it’s positioned. Upright, flat, flipped. The interaction becomes spatial instead of purely screen-based.

The Concept

Choose 25 or 50 minutes.

Stand the device upright, and sand begins to fall. The device behaves like a sandglass.

Grain by grain, one half empties while the other slowly fills. Under the surface, the sand follows simple gravity and collision logic.
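The gravity-and-collision rule can be sketched in a few lines. This is a minimal illustration of the classic falling-sand rule, not the code from the project: the grid layout, the `step` name, and the fixed left-before-right preference are all assumptions made for the example.

```python
def step(grid):
    """Advance the sand by one tick, in place.

    grid is a list of rows (row 0 is the top); 1 = sand, 0 = empty.
    """
    rows = len(grid)
    cols = len(grid[0])
    # Scan from the second-to-last row upwards so that a grain
    # moves at most one cell per tick.
    for y in range(rows - 2, -1, -1):
        for x in range(cols):
            if grid[y][x] != 1:
                continue
            below = y + 1
            if grid[below][x] == 0:
                # Gravity: the cell underneath is free, fall straight down.
                grid[y][x], grid[below][x] = 0, 1
                continue
            # Collision: blocked underneath, try to slide diagonally.
            for dx in (-1, 1):
                nx = x + dx
                if 0 <= nx < cols and grid[below][nx] == 0:
                    grid[y][x], grid[below][nx] = 0, 1
                    break
    return grid
```

Calling `step` once per frame is enough to make a pile form. A fuller version would probably randomize the diagonal direction so the pile does not lean to one side.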

The numeric timer only appears in the last three minutes. I didn’t want precision to dominate the entire session. Early on, the goal is immersion. As the session nears its end, I think showing the remaining time can support closure and help you wrap up or prepare to pause.
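As a rough sketch of that rule, the display logic only needs to know how many seconds are left. The function name and the `M:SS` format are assumptions for the example, not taken from the project code.

```python
REVEAL_WINDOW_S = 3 * 60  # digits appear only in the last three minutes


def countdown_label(remaining_s):
    """Return "M:SS" inside the reveal window, otherwise None (sand only)."""
    if remaining_s > REVEAL_WINDOW_S:
        return None
    remaining_s = max(0, int(remaining_s))
    minutes, seconds = divmod(remaining_s, 60)
    return "{}:{:02d}".format(minutes, seconds)
```

The drawing code can then skip the text layer whenever the function returns `None`.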

Interaction Model

From an interaction perspective, this became an exploration of digital and physical alignment. The interface is digital, but the interaction is physical. I wanted the behavior to work the way a sandglass works. When the device stands upright, sand falls. When it lies flat, the flow stops. When it’s flipped, the cycle begins again. The orientation of the object defines the state of the system. There are no buttons cycling through modes and no extra UI layers explaining what’s happening. The physical gesture directly controls the digital simulation.
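One way to express "orientation defines the state" is a small state machine fed by accelerometer readings. The sketch below is hardware-free and makes several assumptions: the y axis runs along the device's long side, z points out of the screen, readings are in g, and the `0.8` threshold and all names are invented for the example.

```python
UPRIGHT, FLIPPED, FLAT, TILTED = "upright", "flipped", "flat", "tilted"


def classify_orientation(ay, az, threshold=0.8):
    """Map accelerometer readings (in g) to a coarse orientation."""
    if ay >= threshold:
        return UPRIGHT
    if ay <= -threshold:
        return FLIPPED
    if abs(az) >= threshold:
        return FLAT
    return TILTED  # somewhere in between; treated like a pause below


class SandglassTimer:
    def __init__(self, duration_s):
        self.duration_s = duration_s
        self.remaining_s = duration_s
        self.last_vertical = None  # last upright/flipped pose seen

    def update(self, orientation, dt_s):
        """Advance by dt_s seconds; return True while sand is flowing."""
        if orientation not in (UPRIGHT, FLIPPED):
            return False  # lying flat (or tilted): the flow stops
        if self.last_vertical is not None and orientation != self.last_vertical:
            # Turned end over end: the cycle begins again.
            self.remaining_s = self.duration_s
        self.last_vertical = orientation
        self.remaining_s = max(0, self.remaining_s - dt_s)
        return self.remaining_s > 0
```

Because the timer remembers the last vertical pose rather than the last pose overall, laying the device flat and then standing it up the other way still counts as a flip.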

Reflection

After spending some time with it, a few things stood out to me.

Representation shapes experience

The structure of Pomodoro didn’t change, only the interface did. Numbers felt rigid to me. They constantly reminded me that time was ticking down. With sand, the timer fades into the background. It feels less like watching something disappear and more like watching something flow. The underlying system stays the same, but the emotional framing shifts completely.

Physical intuition reduces friction

This experiment made me wonder whether physical intuition reduces friction. When a digital system behaves the way a physical object behaves, it requires less explanation. I don’t have to think about it as much.

Feeling is a differentiator

The structure stayed the same. The logic stayed the same. The function stayed the same. But the experience was different. In a world where building functional products is easier than ever, function alone doesn’t make something stand out. Feeling does. This exploration became an exercise in designing for that.

Resources

You can get the code here: https://github.com/thebuddyman/m5-playground/blob/main/apps/pomodoro_sandglass_app.py

Falling sand mechanics are inspired by https://jason.today/falling-sand

Music used: reset, restart, focus - the cozy lofi

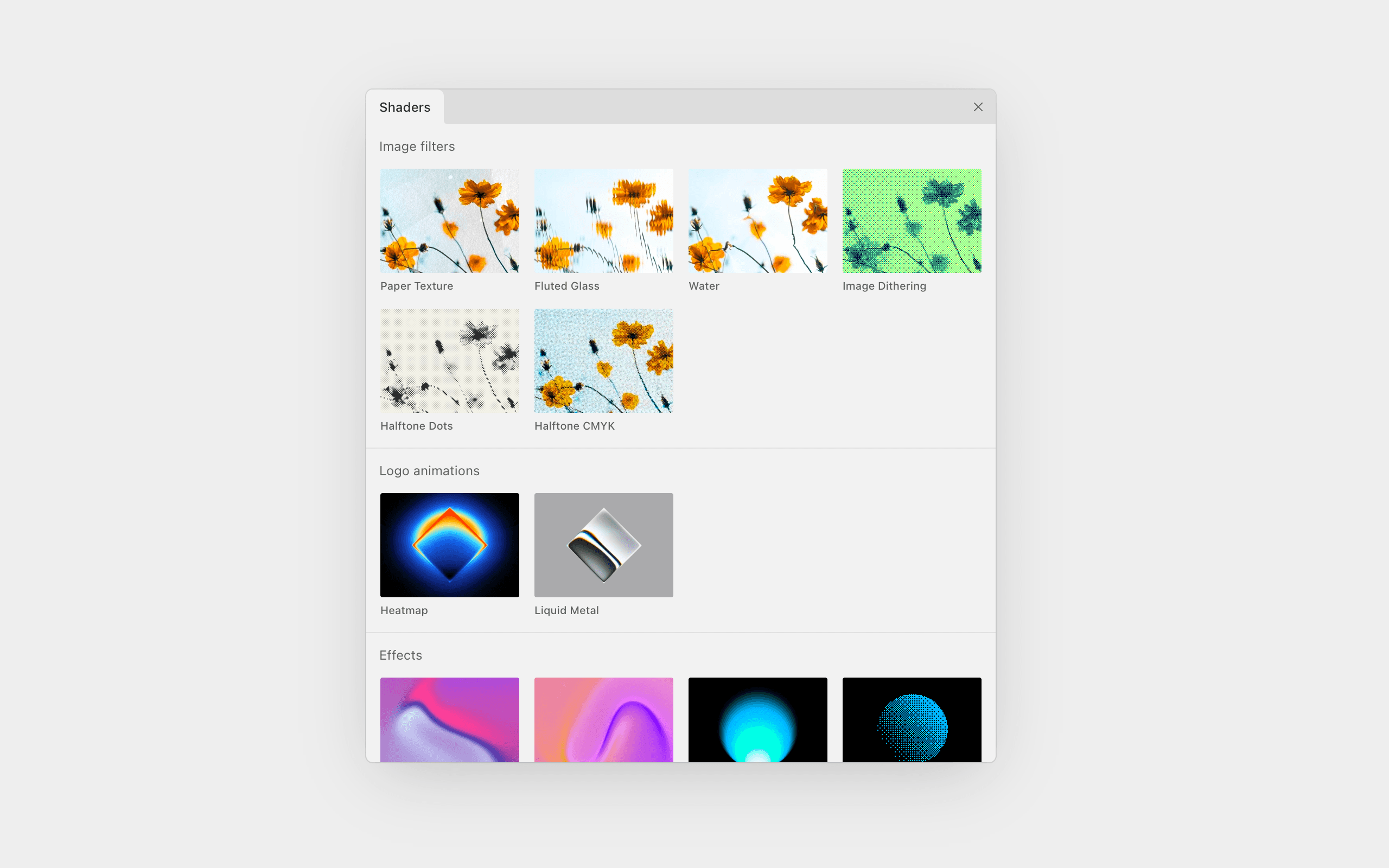

Paper Design App. Exploring Shader Tools

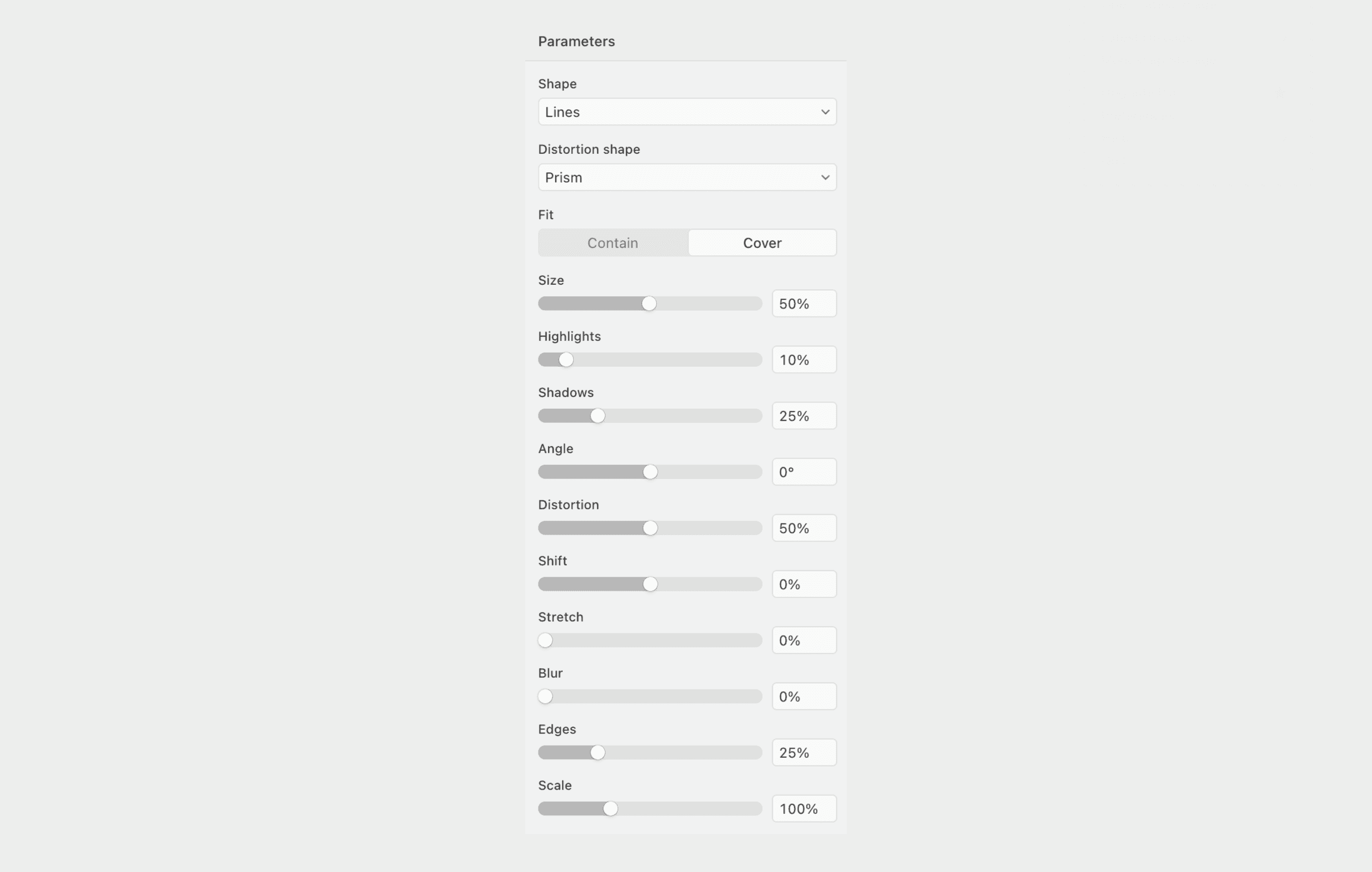

A new design tool on the block: Paper. It’s been on my radar, and recently I gave it a try for designing UIs. What caught my attention were the shaders. They’re basically filters, effects that you can apply to an image or generate based on provided parameters.

For example, I tried the Fluted Glass image filter and adjusted its parameters to explore different visual variations. What makes it terrific is its real-time processing.

And it’s animated. We can set the animation speed to 0% and download different variations of the image.

Here are a few results from a quick test. Feel free to download them if you’d like.

M5: First Encounter

It all started when I saw a tiny, saturated yellow rectangular device in this LinkedIn post. I was intrigued by this retro-looking piece. A creative technologist used it to create a pocket-sized AI assistant for his kid. It records a five-second audio clip, sends the query to OpenAI, then shows the answer on a screen.

A week later, voila, I ordered one myself from AliExpress. It took almost 10 days to arrive, though (Amazon is faster, but €15 more expensive). Still, my inner kid erupted with joy when I opened it. I couldn’t wait to tinker with it and try out a few ideas.

The M5Stick, in a nutshell, is a tiny programmable hardware platform for IoT prototyping, featuring built-in sensors, a display, input controls, and wireless connectivity. For example, you can prototype a wearable step counter or activity tracker.

There’s an innovation studio in the UK called Special Project whose work combining digital experiences and physical products I’ve always admired. Exploring with the M5StickC isn’t really comparable to designing a full physical product; it’s just a different medium. But it lets me step outside the mobile/web app space and start thinking in new interaction formats. I’m hopeful this is the beginning of experimenting with something bigger beyond the M5StickC.

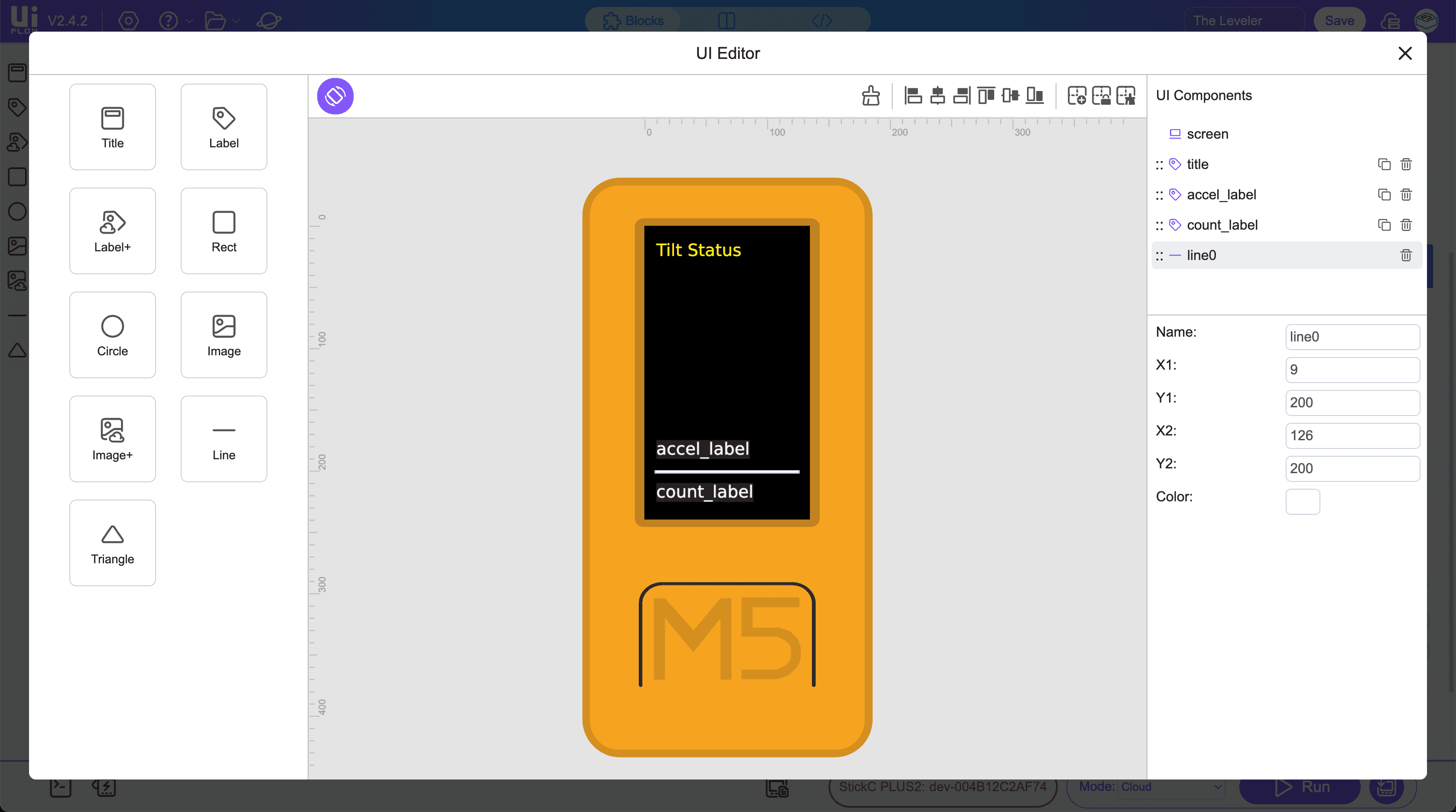

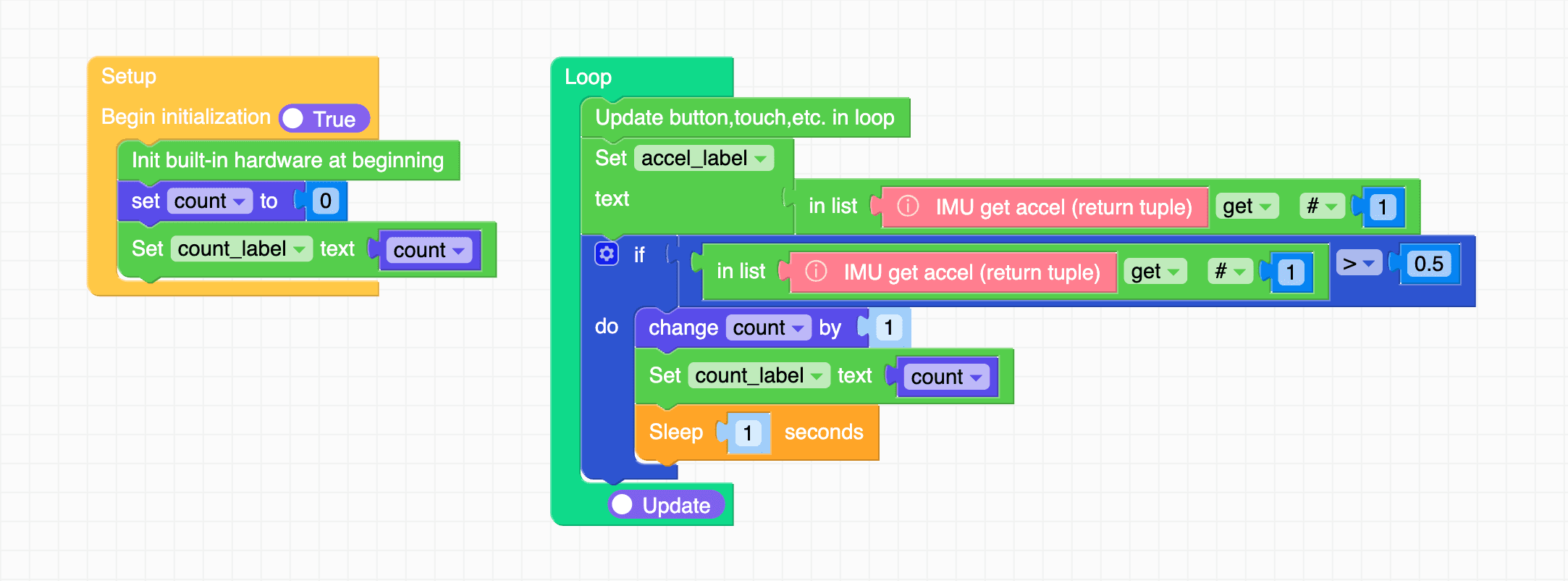

Testing an event trigger for the accelerometer in UIFlow2 drag-and-drop IDE

First things first: I relied on Gemini to learn the basics, such as installing (burning) the firmware, connecting to Wi-Fi, and writing a first program. I chose UIFlow2 over Arduino because it is easier to use: it combines a drag-and-drop IDE with a code editor. UIFlow2 code is based on Python. I had never learned Python, but I learned C and C++ back in uni, so I understand the core programming concepts. Mostly, though, I rely on an LLM to help me generate the code.

I skipped the most common first lesson, the “Hello World” print. Instead, I used drag and drop to build a tilt status and test how to add visuals and handle an event. In this case, one event fires when the accelerometer detects that the device is tilted, and another fires when the tilt passes a certain value, which adds to a counter.
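For readers who prefer code to blocks, the same idea can be sketched in plain Python. This is not what the drag-and-drop IDE generates; the function and class names, the 45-degree threshold, and the choice of measuring tilt against the z axis are assumptions for illustration.

```python
import math


def tilt_degrees(ax, ay, az):
    """Angle between the screen normal (z axis) and gravity, in degrees."""
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    if norm == 0:
        return 0.0  # no usable reading
    cos_angle = max(-1.0, min(1.0, az / norm))
    return math.degrees(math.acos(cos_angle))


class TiltCounter:
    def __init__(self, threshold_deg=45.0):
        self.threshold_deg = threshold_deg
        self.count = 0
        self._above = False  # was the previous reading past the threshold?

    def update(self, angle_deg):
        """Feed one reading; count only when the tilt crosses upward."""
        above = angle_deg > self.threshold_deg
        if above and not self._above:
            self.count += 1
        self._above = above
        return self.count
```

Counting only on the upward crossing keeps a device that is held tilted from incrementing the counter on every reading.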

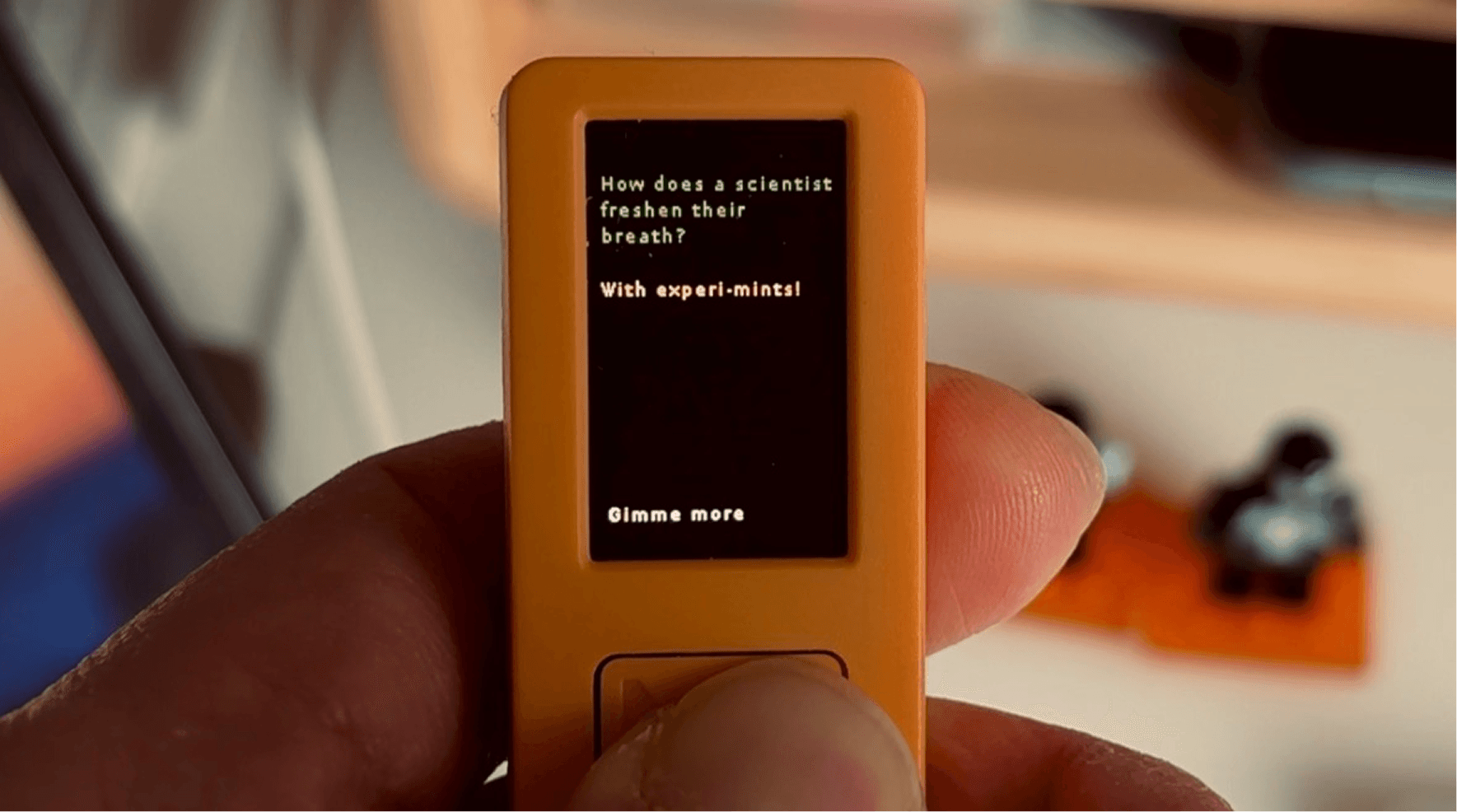

Next, I created the Gimme-a-Pun app. I connected to an API and used the code editor.

At the beginning, I was using the drag-and-drop IDE, but I couldn’t find the API integration block. I tried looking for documentation and prompting AI, but no luck. I had to course-correct a couple of times because the AI kept hallucinating references to UIFlow version 1. In the end, I jumped into the code editor.

I had an idea: every time the main button is pressed, it gives you a pun. I used a public endpoint: https://official-joke-api.appspot.com/random_joke

I generated the code using Gemini Pro. Other than a text-wrapping issue, it worked as intended. I couldn’t find a wrapping function I could call directly. I saw an Arduino example, but I wanted to keep it in Python on UIFlow for now. I prompted a few more times and got a decent solution after giving it a reference from here: https://docs.python.org/3/library/textwrap.html
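When a wrapping helper isn’t available, a greedy word wrap is short enough to write by hand. The version below is a sketch of that general technique, not the code Gemini produced; `wrap_text` and the character-based `width` are assumptions (a real screen would likely need pixel widths for its font).

```python
def wrap_text(text, width):
    """Greedy word wrap: return lines of at most `width` characters."""
    lines = []
    current = ""
    for word in text.split():
        # A word longer than a full line can never fit, so hard-split it.
        while len(word) > width:
            if current:
                lines.append(current)
                current = ""
            lines.append(word[:width])
            word = word[width:]
        if not current:
            current = word
        elif len(current) + 1 + len(word) <= width:
            current += " " + word  # the word still fits on this line
        else:
            lines.append(current)  # line is full, start a new one
            current = word
    if current:
        lines.append(current)
    return lines
```

For example, `wrap_text("the quick brown fox", 10)` returns `["the quick", "brown fox"]`, and each line can then be drawn at its own y offset.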

Check this out…

Some takeaways

Beyond being a hobby project, as a (digital) product designer it feels like a new playground to exercise:

Designing within tight constraints. A small screen, three buttons, no keyboard, no mouse. What can I create without them?

Thinking in a different medium. An opportunity to explore solutions beyond a mobile app. A what-if for a tool that does not live on a phone.

Mashing up my design and prototyping skills with code. It does not fully feel like coding since I am not writing much syntax, but it is not pure vibe coding either. It sits somewhere in between. Still, it’s promising. It gives me another tool I can reach for when something is better prototyped in code.

I’m looking forward to exploring more ideas.

If you’re also playing with this tiny tool, I’d love to hear from you.

The skills that become more essential in the age of AI

As part of Hyper Island’s Industry Research Project (IRP), I looked at how junior UX designers should adapt in an AI-accelerated industry, and which core abilities stay resilient as execution-heavy work gets automated and commoditised.

When I started the project, I focused on the disappearance of entry-level UX design jobs, an issue that felt increasingly relevant. The causes are mixed: a slow economy, global uncertainty, and AI. Although AI isn’t the main reason, it’s still eating up entry-level tasks or making them easier.

In design, it’s not the entry-level tasks that are most affected, but the early exploration ones: generating first drafts, alternative layouts, or draft UX copy. And it’s only getting better over time, relentlessly devaluing a human designer. A founder or business-minded person might think, why do we need to hire a human designer if we can just subscribe for 20 bucks? Or, we just need one conductor to orchestrate LLMs to design, code, and write copy.

Sitting with that reality pushed me into two different states of thinking.

One was defensive. It’s the mode where you look for what AI can’t do, what it can’t replace. I found things like curiosity, judgment, empathy, connecting ideas, systems thinking, and problem solving. Are these new skills? Not really. They’ve just been buried under conversations about pixel-perfect work, design systems, and cool micro-interactions.

The other was more opportunistic. It’s seeing AI in a more positive light, as a real opportunity to make our work better. When I interviewed leaders and designers who use AI, a recurring theme emerged:

“AI is just a tool.”

“It’s only as good as the person using it and that person’s depth in their domain.”

Good judgment complements the use of AI, and to develop good judgment, you need other skills: synthesis, critical thinking, systems thinking, and the ability to frame or reframe a lens.

Looking at it from both sides, the need to protect and the chance to grow point to the same thing: human skills. The very abilities that make us human are what make us more valuable and make the use of AI more effective.

Image credit: Luke Skywalker and C-3PO in Star Wars: A New Hope (1977) © Lucasfilm / Disney.

Is AI coming to UX design jobs? I scanned 554 UX design openings globally—here’s what I found

I was curious how often today’s UX job openings mention AI. It reminded me of almost 10 years ago, when design systems became a buzzword and “design system” started showing up in job requirements in all kinds of shapes.

Now the new buzz is AI. I scraped UX job openings on LinkedIn and Indeed (thanks to this repo). Alongside AI mentions, I also looked at how many roles are entry-level.

Updated from 576 to 554 after removing postings where missing cells were unintentionally counted.

Here’s what I found:

Global

~11.19% mention explicit AI requirements

~15.88% target entry-level (up to 2 years)

~1.62% mention AI requirements on entry-level roles

US (New York, SF, Seattle)

~9.26% mention explicit AI requirements

~15.03% target entry-level (up to 2 years)

~0.62% mention AI requirements on entry-level roles
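To put the global percentages in absolute terms, they can be converted back into approximate posting counts. The counts below are back-calculated from the rounded percentages and the 554 total, so treat them as estimates rather than figures from the dataset; the helper names are made up for this sketch. The US figures are left out because the number of US postings isn’t stated.

```python
TOTAL_POSTINGS = 554  # global total stated in the post


def implied_count(percent, total=TOTAL_POSTINGS):
    """Approximate number of postings behind a published percentage."""
    return round(percent / 100 * total)


def share(count, total=TOTAL_POSTINGS):
    """Percentage of postings, rounded to two decimals."""
    return round(100 * count / total, 2)


ai_roles = implied_count(11.19)        # about 62 postings
entry_roles = implied_count(15.88)     # about 88 postings
entry_ai_roles = implied_count(1.62)   # about 9 postings
```

Running `share` on those counts reproduces the published 11.19%, 15.88%, and 1.62%, which suggests the estimates are at least consistent with the numbers above.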

Notes

Job postings were taken within a 30-day window starting August 8, totaling 554 postings across 12 cities.

Data came from LinkedIn and Indeed, focusing on UI/UX and product design roles.

Entry-level included postings that accept 0–1 year of experience.

Other platforms are missing, such as Glassdoor, Google, and company career pages.

Recurring themes in the listings

Curiosity/interest in AI: phrases like “curiosity about AI,” “openness to AI tools,” “deep curiosity,” and “enthusiasm for AI” appear 5–6 times.

AI tools (explicit mentions): direct references to tools (ChatGPT, MidJourney, Galileo AI, Uizard, etc.) appear 10+ times. Variants like “AI-driven tools,” “AI-assisted design tools,” and “AI prototyping tools” show up consistently.

AI-driven workflows / integration: mentions of integrating AI into workflows, “AI-driven workflows,” or “transforming processes with AI” appear 5–6 times.

AI product specialization: knowledge of AI product domains (conversational UX, personalization features, data-heavy AI/insights products) appears 3–4 times.

Three ways AI shows up in job requirements

AI literacy / awareness / curiosity: general understanding, openness, staying updated

AI tools fluency: hands-on use of AI-assisted design/prototyping platforms, plugins, workflows

AI product specialization: designing AI-powered features or products
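A simple way to sort postings into these three buckets is phrase matching. The sketch below is hypothetical and not the method used for this scan: the category keys are invented, and the phrase lists only reuse examples quoted earlier in this post, so a real pass would need a much longer list and manual review.

```python
# Hypothetical phrase lists, seeded from the examples quoted in this post.
CATEGORY_PHRASES = {
    "literacy": ["curiosity about ai", "openness to ai", "enthusiasm for ai"],
    "tools": [
        "chatgpt", "midjourney", "galileo ai", "uizard",
        "ai-driven tools", "ai-assisted design tools", "ai prototyping tools",
    ],
    "specialization": ["conversational ux", "personalization features"],
}


def tag_posting(text):
    """Return the sorted list of categories whose phrases appear in the text."""
    lowered = text.lower()
    return sorted(
        category
        for category, phrases in CATEGORY_PHRASES.items()
        if any(phrase in lowered for phrase in phrases)
    )
```

A posting can land in more than one bucket, which matches how the listings read: many ask for tool fluency and general curiosity in the same paragraph.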

Final Notes

I spoke with Roger Wong, who recently wrote about the design talent crisis and the vanishing bottom rung. He told me the evidence isn’t clear that AI is the reason entry-level roles are disappearing, but the bottom rung has been thinning for a while, and AI may be accelerating the squeeze.

He also suspects job postings don’t capture the full reality. In practice, there’s often a gap between recruiters and hiring managers, and recruiters may reuse older requirement templates. That makes AI in job requirements an imperfect signal. Even when it isn’t listed, the workflow is shifting, and the way designers work is already changing.

2026. Thomas Tjaja – UX Design & Consultancy

KvK: 98789988 · BTW: NL005353413B32 · Tilburg, Netherlands
